Project: Data-Science Framework Development

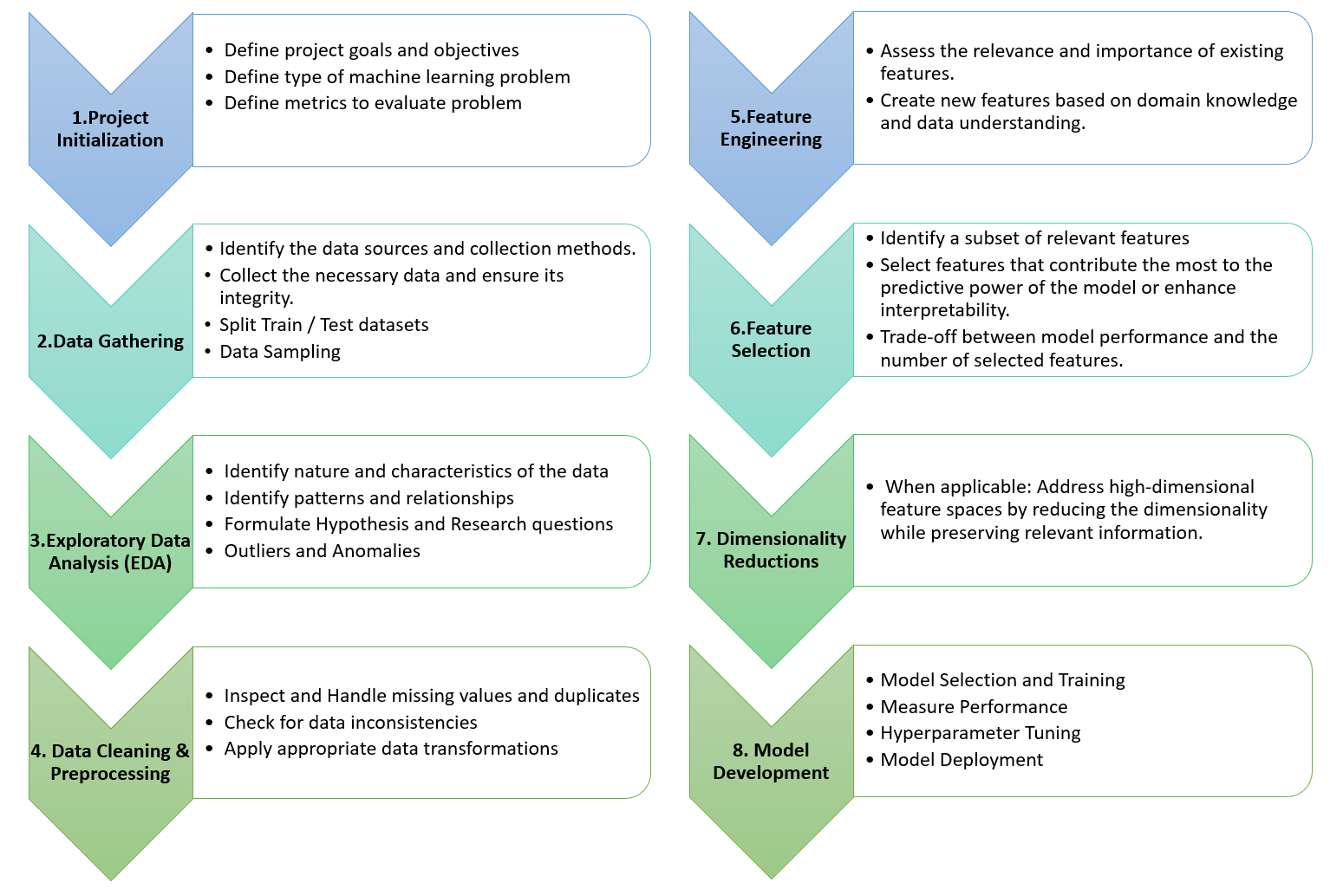

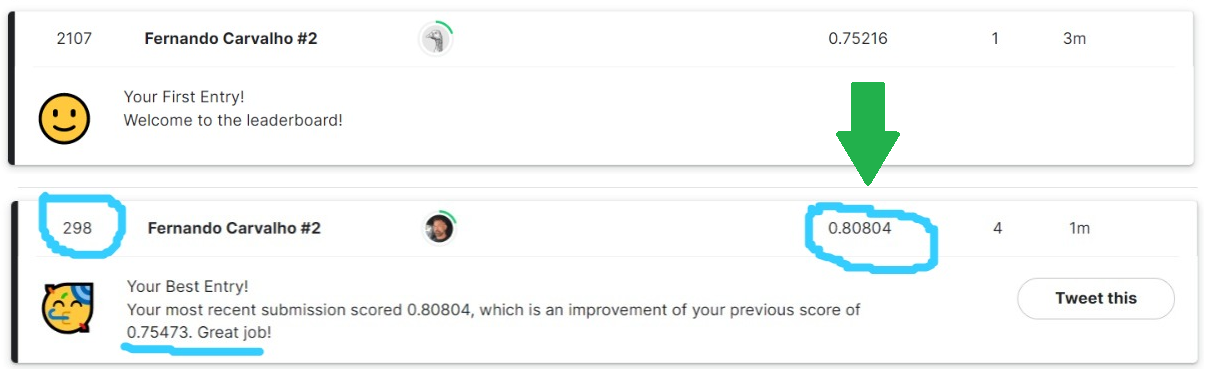

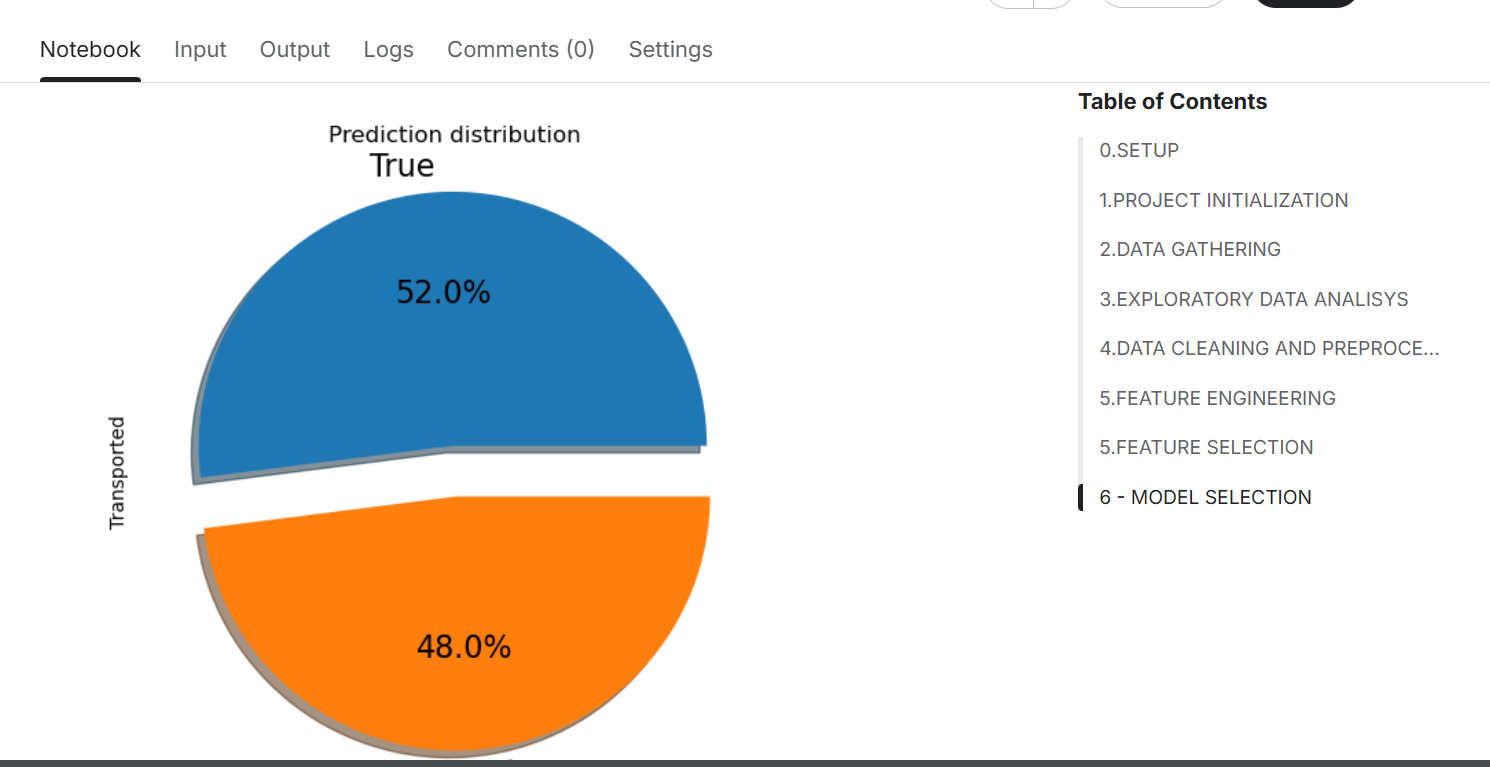

This project was developed as part of a self-guided, project-based learning approach to mastering the data science lifecycle. The goal was to solve the Kaggle Spaceship Titanic classification problem, applying the full data science workflow—from data cleaning and feature engineering to model selection and evaluation—using real-world techniques. The final deliverable is a Jupyter Notebook demonstrating an end-to-end solution, including the creation of a reusable data science project framework.

https://youtu.be/VsivL6R1eAs

Take a look inside

Technologies Used

Python:

- Data Manipulation: numpy, pandas

- Data Visualization: matplotlib, seaborn, missingno, tabulate

- Model, pipeline and evaluation: scikit-learn, category_encoders

- Statistics: scipy

- Platform: Kaggle

Project Links

Motivation

The project was initiated to:

- Learn data science through hands-on, real-world problem-solving

- Understand the complete data science pipeline, including EDA, modeling, and evaluation

- Develop and formalize a personal framework to apply to future projects

Challenges & Learnings

- Process Structuring: One of the biggest challenges was understanding and sequencing the steps in a data science project. Overcoming this helped create a reusable framework for future projects.

- Feature Engineering: Identifying meaningful features in a fictional dataset required creativity, experimentation, and iteration.

- Model Optimization: Learning how to fine-tune models and evaluate performance using cross-validation techniques was a key technical milestone.

- Self-Guided Learning Discipline: Without formal guidance, managing resources (YouTube, books, ChatGPT, etc.) and adapting to unexpected problems was an exercise in self-reliance and growth.

- Critical Thinking & Domain Understanding: Practiced translating vague problem definitions into measurable modeling objectives, a key data science skill.
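As a rough illustration of the Model Optimization point, the sketch below shows one way to tune a model with cross-validation in scikit-learn. It is not the notebook's actual code. The toy data, the column names (`age`, `spend`, `home`), the random forest classifier, and the small parameter grid are all assumptions made for this example.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import RandomForestClassifier
from sklearn.impute import SimpleImputer
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder


def make_toy_data(n_rows=200, seed=0):
    """Build a small synthetic dataset (hypothetical columns, not the Kaggle data)."""
    rng = np.random.default_rng(seed)
    df = pd.DataFrame({
        "age": rng.normal(30, 10, n_rows),
        "spend": rng.exponential(100, n_rows),
        "home": rng.choice(["A", "B", "C"], n_rows),
    })
    # Binary target loosely driven by spend plus noise.
    y = (df["spend"] + rng.normal(0, 50, n_rows) > 100).astype(int)
    # Blank out 20 ages so the imputation step has something to do.
    df.loc[rng.choice(n_rows, 20, replace=False), "age"] = np.nan
    return df, y


def build_search(cv_splits=5, seed=0):
    """Wrap preprocessing and a classifier in one pipeline, then grid-search it."""
    preprocess = ColumnTransformer([
        # Median-impute numeric columns.
        ("num", SimpleImputer(strategy="median"), ["age", "spend"]),
        # One-hot encode the categorical column; ignore unseen categories.
        ("cat", OneHotEncoder(handle_unknown="ignore"), ["home"]),
    ])
    model = Pipeline([
        ("preprocess", preprocess),
        ("clf", RandomForestClassifier(random_state=seed)),
    ])
    # Assumed, deliberately tiny grid; a real search would likely be wider.
    param_grid = {
        "clf__n_estimators": [50, 100],
        "clf__max_depth": [3, None],
    }
    cv = StratifiedKFold(n_splits=cv_splits, shuffle=True, random_state=seed)
    return GridSearchCV(model, param_grid, cv=cv, scoring="accuracy")


X, y = make_toy_data()
search = build_search()
search.fit(X, y)
print("Best parameters:", search.best_params_)
print("Mean cross-validated accuracy:", round(search.best_score_, 3))
```

Keeping the preprocessing inside the pipeline means the imputer and encoder are refit on each training fold, so the validation folds do not leak into the fitted transformers.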