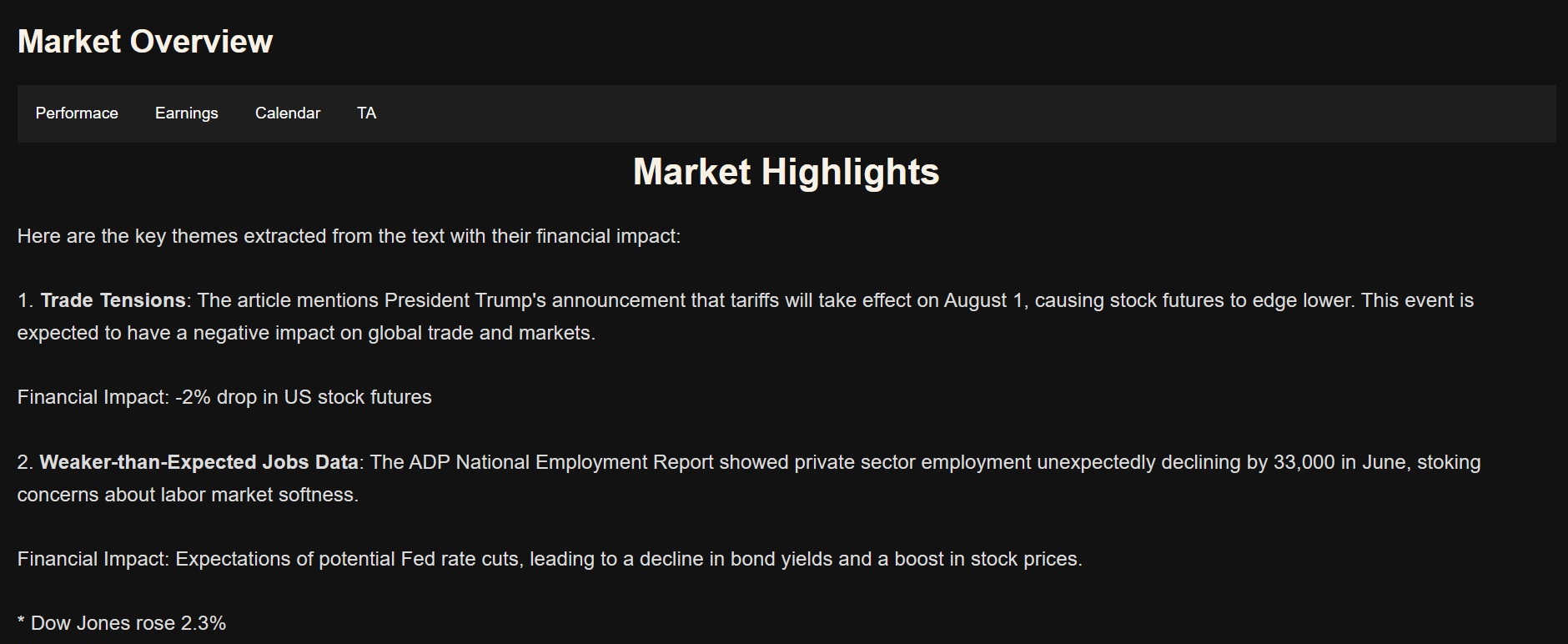

Project: Overall Market Overview

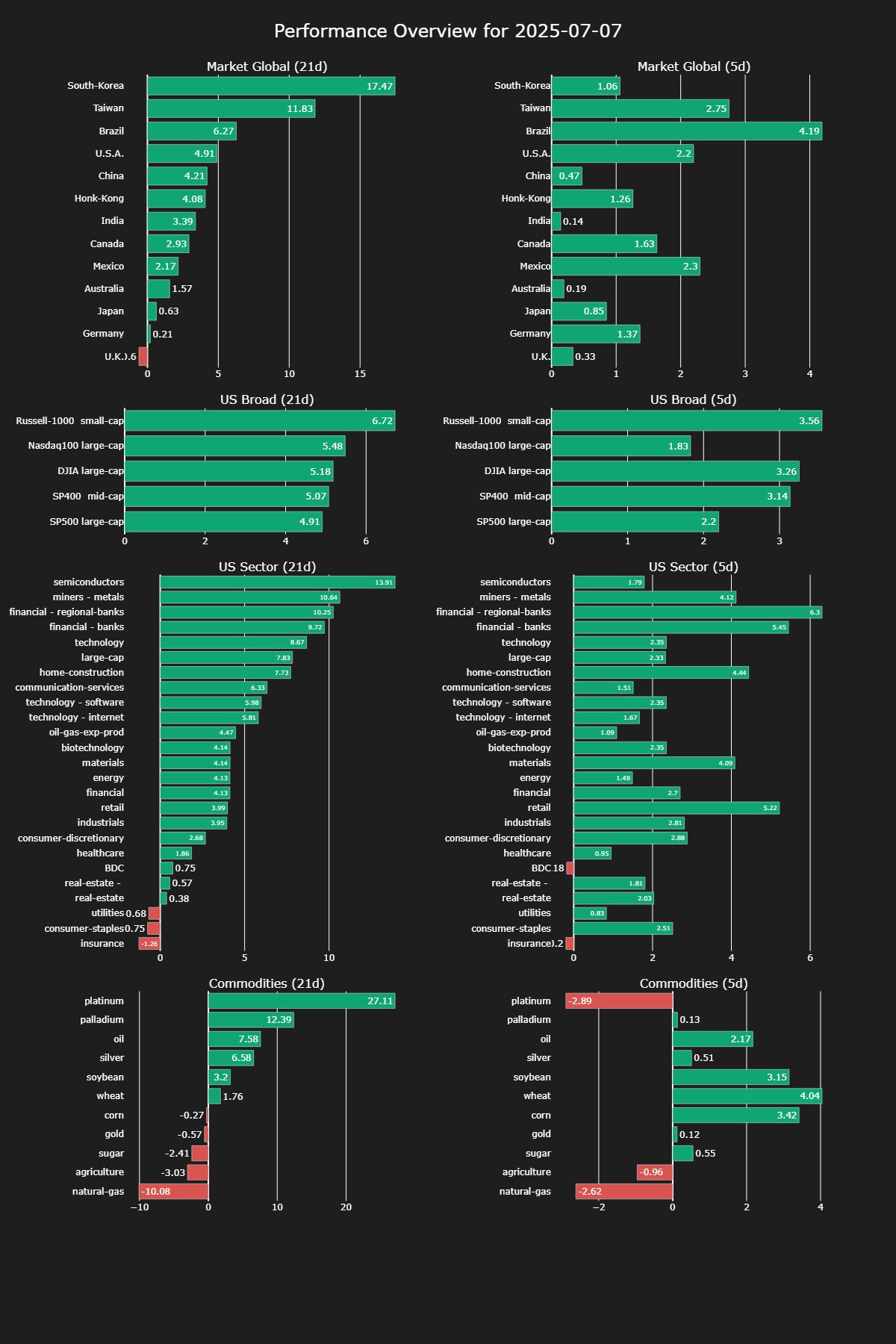

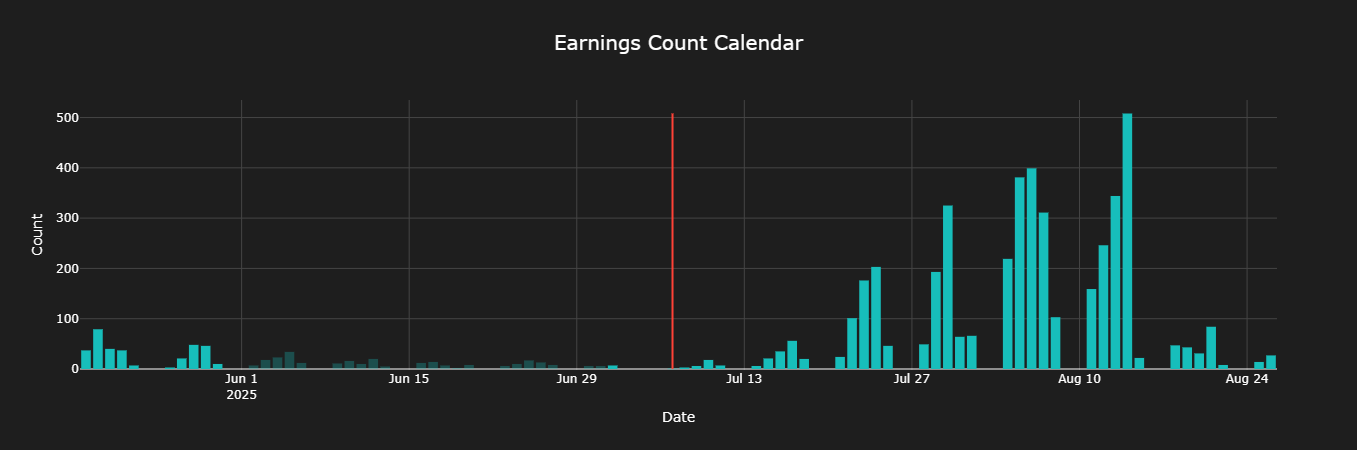

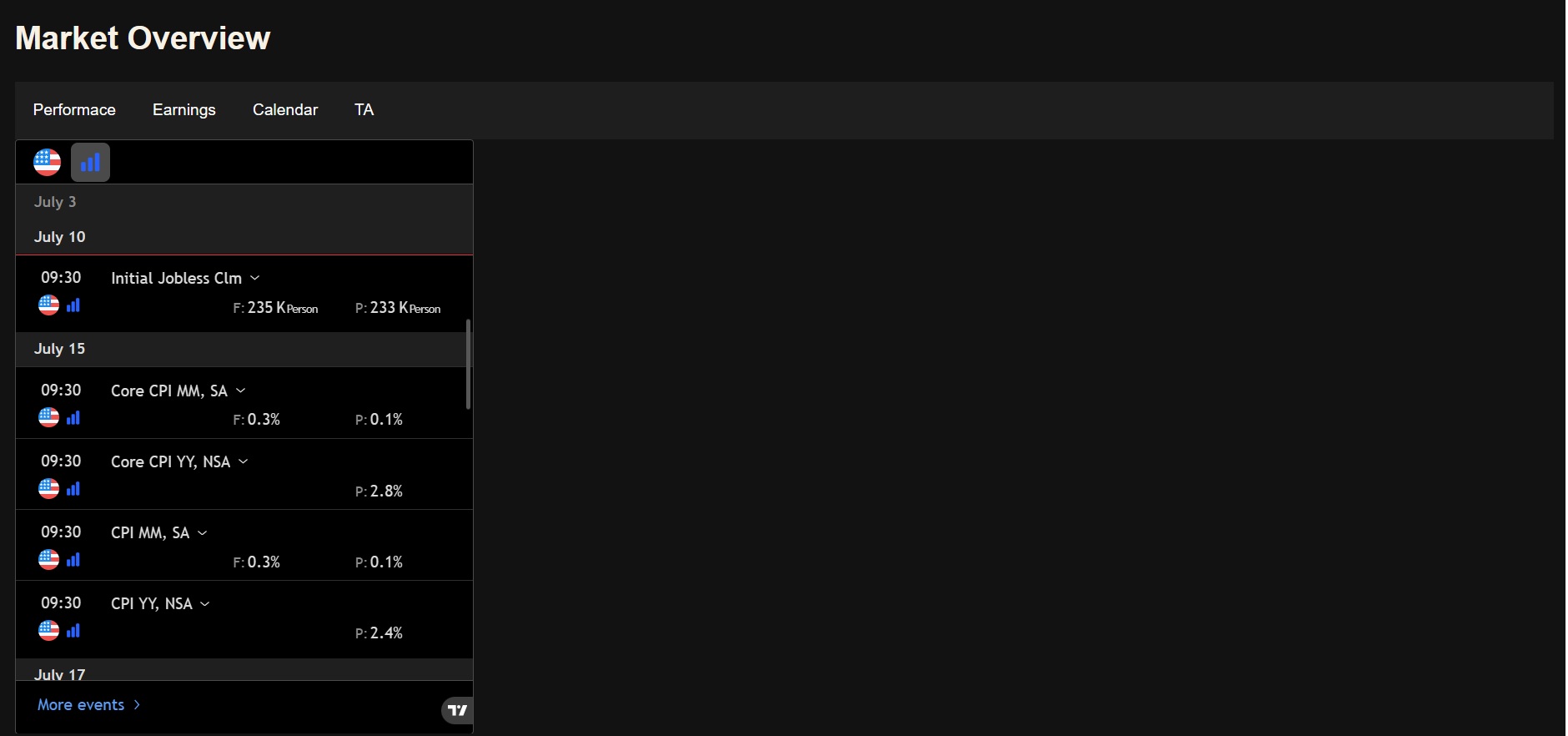

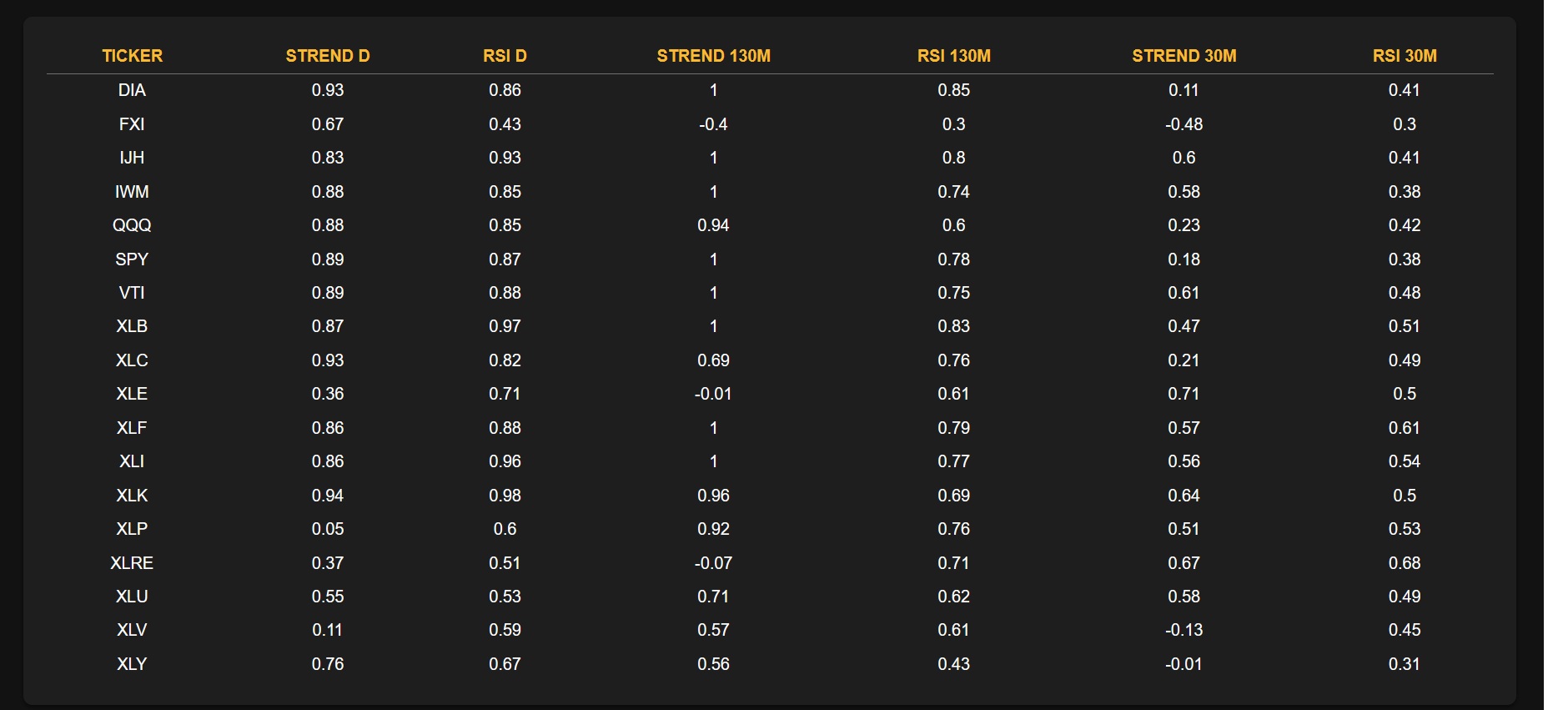

This application delivers a comprehensive market overview by integrating end-of-day market performance, key news events, actionable sector trade ideas, detailed economic and earnings calendars, and multi-timeframe trend analysis across markets and sectors. Its goal is to give users the context they need to make informed trading decisions in the upcoming trading session.

https://youtu.be/bG2yZtRMJ1c

Take a look inside

Technologies Used

Python:

- Front-end: Flask (with HTML and JavaScript)

- Back-end: numpy, pandas, ollama, requests, httpx

- Data Visualization: plotly

- Models: nomic-embed-text (embeddings), llama3.2:3b (text generation)

Motivation

I created this project to help me establish a clear understanding of the market context before the trading day begins. By identifying what’s driving market movement, it provides a structured framework that helps me narrow down the most promising sectors and stocks. This foundation streamlines the process of identifying high-probability day trading opportunities later in the workflow.

Challenges & Learnings

ETL (Extract, Transform, Load) Challenges

- Data Collection from Multiple Sources: Aggregating data from various websites and APIs was one of the most time-consuming parts. Normalizing and formatting inconsistent data into a usable structure required extensive preprocessing and validation logic.

- Storage Strategy: Since long-term data retention wasn’t required, I opted for a lightweight, file-based approach using CSVs. This simplified deployment and made the application faster to prototype and iterate.

- Incremental Updates & Rate Limits: Some data, especially news and calendar updates, needed to be processed incrementally. I had to develop custom solutions to handle partial updates efficiently while working around rate limits and scraping restrictions from multiple web sources.
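The incremental-update and rate-limit handling described above could look roughly like the sketch below. It is an illustration under assumptions, not the project's actual code: the `id` key column and the helper names `merge_new_rows` and `fetch_with_backoff` are hypothetical, and it uses only the standard library (rather than pandas) to stay self-contained.

```python
import csv
import os
import time


def merge_new_rows(csv_path, new_rows, key="id"):
    """Append only rows whose key is not already stored in the CSV.

    Returns the number of rows actually written.
    """
    seen = set()
    fieldnames = None
    if os.path.exists(csv_path):
        with open(csv_path, newline="", encoding="utf-8") as f:
            reader = csv.DictReader(f)
            fieldnames = reader.fieldnames
            seen = {row[key] for row in reader}

    # Keep only rows that are new relative to the file and to this batch.
    fresh = []
    for row in new_rows:
        k = str(row[key])
        if k not in seen:
            seen.add(k)
            fresh.append(row)
    if not fresh:
        return 0

    # Write a header only when the file is new or empty.
    write_header = fieldnames is None
    fieldnames = fieldnames or list(fresh[0].keys())
    with open(csv_path, "a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames, extrasaction="ignore")
        if write_header:
            writer.writeheader()
        writer.writerows(fresh)
    return len(fresh)


def fetch_with_backoff(fetch, retries=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(); on failure wait base_delay * 2**attempt, then retry.

    The last failure is re-raised so the caller can decide what to do.
    """
    for attempt in range(retries):
        try:
            return fetch()
        except Exception:
            if attempt == retries - 1:
                raise
            sleep(base_delay * 2 ** attempt)
```

With this shape, a scheduled job can call `fetch_with_backoff` around each source and pass the result to `merge_new_rows`, so re-running the job after a partial failure does not duplicate rows already on disk.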

LLM Integration Challenges

- Large-Scale News Processing: With a high volume of news articles to process daily, I implemented an embedding pipeline that converts articles into vector representations before querying the LLM, so that only the most relevant articles are passed in and the input size stays small.

- Prompt Engineering: Refining prompts was critical to extracting accurate summaries and insights. I iterated on multiple prompt strategies for different use cases (e.g., summarization vs. ideation).

- Temperature Tuning for Use Cases: I adjusted the LLM's sampling parameters depending on the task: a lower temperature for factual extraction and summarization, and a higher temperature for generating trade ideas based on sector trends and news sentiment.
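The embedding-based filtering and per-task temperature settings above can be sketched as follows. This is a minimal illustration, not the project's actual pipeline: the helper names (`cosine`, `top_k_articles`, `ollama_embed`, `ask`), the prompt layout, and the temperature values 0.2 and 0.8 are assumptions. The two Ollama helpers assume the `ollama` Python package and a running local Ollama server with both models pulled.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def top_k_articles(query, articles, embed, k=3):
    """Return the k articles whose embeddings are closest to the query."""
    q = embed(query)
    scored = [(cosine(q, embed(text)), text) for text in articles]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]


# Illustrative values only: low temperature for factual tasks,
# higher temperature for idea generation.
TASK_OPTIONS = {
    "summarize": {"temperature": 0.2},
    "ideate": {"temperature": 0.8},
}


def ollama_embed(text, model="nomic-embed-text"):
    """Embed text with a local Ollama model (requires a running server)."""
    import ollama  # imported lazily so the helpers above work without it
    return ollama.embeddings(model=model, prompt=text)["embedding"]


def ask(task, question, context, model="llama3.2:3b"):
    """Send the selected articles plus a question to the local LLM."""
    import ollama
    prompt = "Context:\n" + "\n---\n".join(context) + f"\n\nTask: {question}"
    response = ollama.chat(
        model=model,
        messages=[{"role": "user", "content": prompt}],
        options=TASK_OPTIONS[task],
    )
    return response["message"]["content"]
```

A call such as `ask("summarize", "Summarize today's energy news", top_k_articles("energy sector", articles, ollama_embed))` would then send only the closest articles to the model. In practice the article embeddings would be computed once and cached rather than recomputed for every query.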

Key Technical Learnings

- Flask (Backend): Built a lightweight and modular web server using Flask, allowing for rapid API development and data orchestration.

- Plotly (Visualization): Significantly improved my skills in creating interactive and responsive charts for multi-timeframe trend analysis and market visualizations.

- Local LLM Deployment: Set up and optimized a local LLM using Ollama, evaluating multiple models for different tasks. I also handled embedding workflows to ensure context-aware responses for news summarization and analysis.

- JavaScript for Front-End Enhancements: Used JavaScript to format dynamic content, improve UI responsiveness, and integrate third-party widgets.

- LLM as a Coding Assistant: Leveraged language models to accelerate development. I learned how to interact effectively with LLMs to solve specific problems, debug faster, and generate boilerplate code, increasing my development efficiency.

- TradingView Widget Integration: Successfully embedded external components such as TradingView widgets for real-time charting, enhancing the application’s functionality and user experience.